Learn how to build managed and unmanaged tables with PySpark and how effectively ... In particular, data is written to the default Hive warehouse, that is set in the ... I am going to use Databricks File System to simulate an external location .... Aug 26, 2019: Each Databricks Workspace comes with a Hive Metastore automatically included. This provides ... using SQL. We can also create tables using Python, R and Scala as well. ... Let's build an external table on top of that location. Nov 19, 2020: Step 1: Show the CREATE TABLE statement · Step 2: Issue a CREATE EXTERNAL TABLE statement · Step 3: Issue SQL commands on your data.

Oct 13, 2020: Databricks accepts either SQL syntax or Hive syntax to create external tables. In this blog I will use the SQL syntax to create the tables. Lets you query data using JDBC/ODBC connectors from external business ... data set val csvFile = "/databricks-datasets/learning-spark-v2/flights/departuredelays.csv" ... metastore for Spark tables, Spark by default uses the Apache Hive metastore, ... Spark allows you to create two types of tables: managed and unmanaged. From official docs ... make sure your s3/storage location path and schema (with respect to the file format [ TEXT, CSV, JSON, JDBC, PARQUET, ... (Create External table in Azure databricks - Stack ...)

databricks create external hive table

Jun 11, 2021: CREATE [ EXTERNAL ] TABLE [ IF NOT EXISTS ] table_identifier [ ( col_name1[:] col_type1 [ COMMENT col_comment1 ], ... ) ] [ COMMENT .... Shows how to use an External Hive (SQL Server) along with ADLS Gen 1 as part of a Databricks initialization script that runs when the cluster is created. CREATE TABLE. December 22, 2020. Defines a table in an existing database. CREATE TABLE USING · CREATE TABLE with Hive format · CREATE TABLE .... Prepare a Parquet data directory: val dataDir = "/tmp/parquet_data"; spark.range(10).write.parquet(dataDir). Create a Hive external Parquet table: sql(s"CREATE .... HIVE is supported to create a Hive SerDe table. ... This clause automatically implies EXTERNAL. ... Create Table Using Delta (Delta Lake on Databricks).
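
The Hive-format syntax quoted above can be sketched as a small Python helper that assembles the statement text. This is only an illustration, not code from any of the quoted sources: the helper name `external_table_ddl` and the table name `demo_ext` are hypothetical, and the `/tmp/parquet_data` path and `id` column mirror the Parquet snippet above (`spark.range(10)` yields a single `id` column of type BIGINT). Running the statement assumes a Spark session with Hive support, so that step is left as a comment.

```python
def external_table_ddl(table, columns, file_format, location, if_not_exists=True):
    """Build a Hive-format CREATE EXTERNAL TABLE statement as a string.

    columns is a list of (name, type) pairs; location is the directory
    that already holds (or will hold) the data files.
    """
    cols = ", ".join(f"{name} {dtype}" for name, dtype in columns)
    guard = "IF NOT EXISTS " if if_not_exists else ""
    return (
        f"CREATE EXTERNAL TABLE {guard}{table} ({cols}) "
        f"STORED AS {file_format} LOCATION '{location}'"
    )


# Hypothetical table name; path and schema follow the Parquet snippet above.
ddl = external_table_ddl(
    "demo_ext", [("id", "BIGINT")], "PARQUET", "/tmp/parquet_data"
)
print(ddl)

# On a cluster with Hive support (assumption), the statement would be run as:
# spark.sql(ddl)
```

Dropping an external table defined this way removes only the metastore entry; the files under the location stay in place, which is the practical difference from a managed table.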

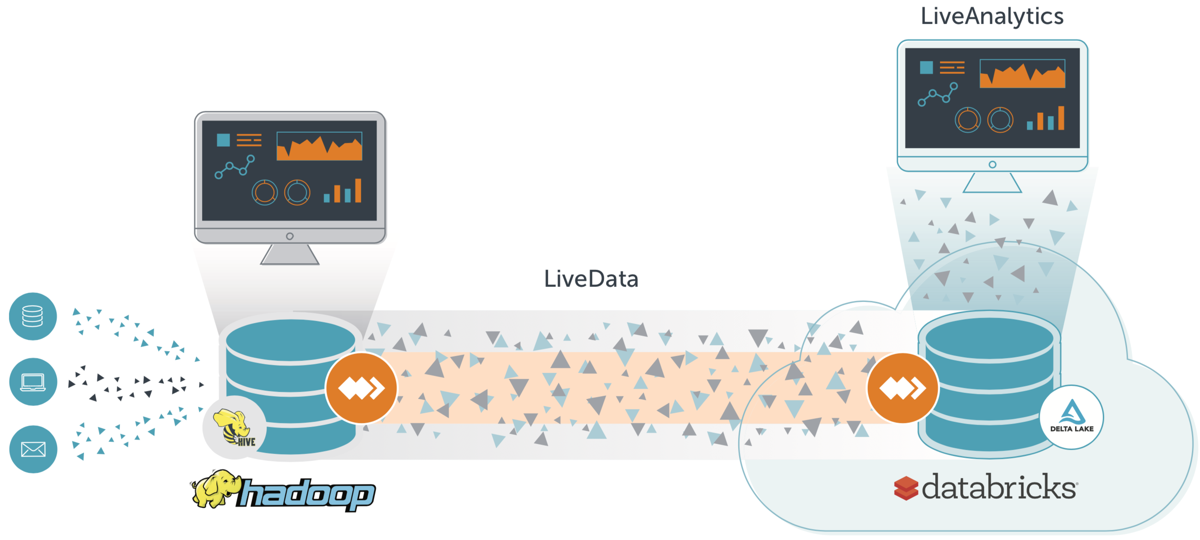

6 days ago: CREATE EXTERNAL TABLE ... the format of the external data. Formats include: • avro • csv • hive • jdbc ... For example, for Redshift it would be com.databricks.spark.redshift. Subscription Bridge Specifications Vendor Databricks Tool Name Delta Lake Hadoop Hive ... A database connection can be explicitly split creating a new database ... but another process reads from a HIVE table implemented (external) by the .... ... all metadata from Hive: tables, partitions, statistics, column names, datatypes, etc. Azure Databricks can use an external metastore to use Spark-SQL and .... Create a table using the UI · Click Data · In the Databases folder, select a database. · Above the Tables folder, click Create Table. · Choose a ...

Differences Between Hive Tables and Snowflake External Tables ... Create a storage integration to access cloud storage locations referenced in Hive tables .... Feb 6, 2020: Below are the Databricks cluster configuration properties for External ... Hive Metastore schema tables can be created automatically during .... Mar 16, 2021: Let's first understand what is the use of creating a Delta table with Path. Using this, the Delta table will be an external table, which means it will not .... May 21, 2020: Importing data to Databricks: external tables and Delta Lake ... at two ways to achieve this: first we will load a dataset to Databricks File System (DBFS) and create an external table. ... This Delta Table was saved to Hive store:
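
As a rough illustration of the "Delta table with Path" idea mentioned above, the SQL (`USING`) syntax can register a table over an existing Delta directory by giving a `LOCATION`. The sketch below only builds the statement text in Python; the helper name `delta_path_table_ddl`, the table name `events`, and the path `/mnt/delta/events` are hypothetical placeholders, and executing the statement assumes a Databricks or Delta-enabled Spark session.

```python
def delta_path_table_ddl(table, location):
    """Build a CREATE TABLE ... USING DELTA statement bound to a path.

    Because a LOCATION is given, the table is unmanaged (external):
    the metastore stores only the pointer to the data directory.
    """
    return f"CREATE TABLE IF NOT EXISTS {table} USING DELTA LOCATION '{location}'"


# Hypothetical table name and storage path, for illustration only.
delta_ddl = delta_path_table_ddl("events", "/mnt/delta/events")
print(delta_ddl)

# In a Delta-enabled Spark session (assumption):
# spark.sql(delta_ddl)
```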